Mirror of https://github.com/ciur/papermerge.git (synced 2026-04-25 12:05:58 +03:00)

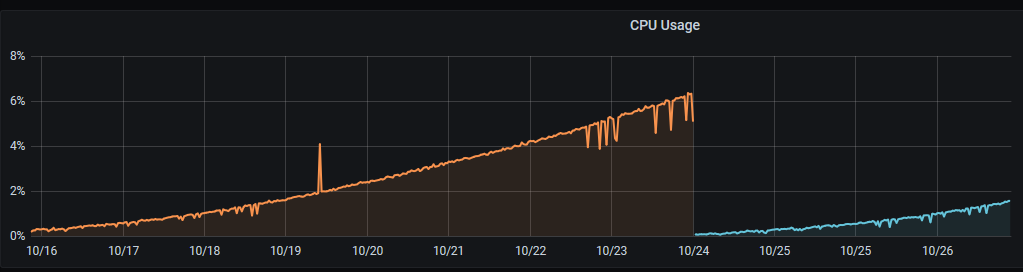

[GH-ISSUE #198] Slow increase in CPU usage over time #162


Originally created by @thespad on GitHub (Oct 27, 2020).

Original GitHub issue: https://github.com/ciur/papermerge/issues/198

Originally assigned to: @ciur on GitHub.

Description

Brand-new install with half a dozen documents uploaded and no automations in place: CPU usage slowly climbs over time without any changes being made.

Expected

CPU usage should not climb over time.

Actual

Info:

@ciur commented on GitHub (Oct 28, 2020):

@TheSpad, really good catch!

I had this issue in production too, and to be honest, it was very annoying :(

The problem is caused by the "default file-based transport of the message queue", which piles up files in the queue folder (in the project directory).
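The following toy model shows why an idle instance can get busier as files accumulate. It is not kombu's actual code. It assumes a worker that lists and sorts the whole queue folder on every poll while looking for a message addressed to its queue. In that setup, files that nobody removes make every poll more expensive. `poll_once`, the `.msg` suffix and the `<id>.<queue>.msg` naming scheme are all hypothetical.

```python
import os
import tempfile
from typing import Optional, Tuple


def poll_once(folder: str, wanted_queue: str) -> Tuple[int, Optional[str]]:
    """One poll of a toy file-based queue.

    Lists and sorts every file in the folder, then returns
    (number of files listed, first file addressed to wanted_queue or None).
    """
    names = sorted(os.listdir(folder))  # work grows with the file count
    for name in names:
        if name.endswith("." + wanted_queue + ".msg"):
            return len(names), name
    return len(names), None


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as folder:
        for day in range(1, 4):
            # Each simulated "day" leaves 1000 files that no consumer removes.
            for i in range(1000):
                name = f"{day:02d}{i:04d}.orphan.msg"
                open(os.path.join(folder, name), "w").close()
            listed, found = poll_once(folder, "celery")
            print(f"day {day}: {listed} files listed per idle poll, found={found}")
```

Each simulated day, the same idle poll lists 1000 more files even though there is no work to do. This matches the slow, steady CPU climb described in the issue.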

You have 2 options:

For the latter, you need to replace the following options:

with these:
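Here is a minimal sketch of that swap in a Django settings module such as `base.py`. It assumes the following:
- Celery reads Django settings with the `CELERY_` prefix.
- Redis runs on the same host on its default port 6379, and database `0` is used.
- The queue folder for the filesystem transport is called `queue`.

These values are illustrative assumptions, not the exact lines from the original comment.

```python
# Before: kombu's file-based transport. Every message is written as a file
# into the queue folder, which is what piles up over time.
# The folder name "queue" is an assumption.
# CELERY_BROKER_URL = "filesystem://"
# CELERY_BROKER_TRANSPORT_OPTIONS = {
#     "data_folder_in": "queue",
#     "data_folder_out": "queue",
# }

# After: Redis as the message broker (assumed local Redis, default port, db 0).
CELERY_BROKER_URL = "redis://localhost:6379/0"
# The folder options only apply to the filesystem transport, so clear them.
CELERY_BROKER_TRANSPORT_OPTIONS = {}
```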

@thespad commented on GitHub (Oct 28, 2020):

Is there any risk of data loss from automating deletion of the queue files?

@ciur commented on GitHub (Oct 28, 2020):

@TheSpad,

If by data you mean your documents, then the answer is NO.

Files in the queue directory contain information about background jobs. Suppose you delete them once a day (e.g. daily at 00:00) and an OCR job on a document starts at that same moment. You might then delete that OCR job, and your document will not be OCRed because its background job was accidentally deleted. The document itself won't be affected at all.

My suggestion is to use Redis (with the configuration I gave above).

The "filesystem://" transport is good for small deployments (development environment, testing, evaluation) because of its simplicity (nothing extra to install).

@alex-phillips commented on GitHub (Oct 28, 2020):

@ciur would it be safe to run something like a cron job that deletes anything in that folder older than a certain time? Or would that still run the risk of lost OCR jobs?

@ciur commented on GitHub (Oct 28, 2020):

@alex-phillips absolutely!

Deleting any file in the queue directory that is older than 24 hours is safe: it will not affect any background job.
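A cron-friendly cleanup script could follow the 24-hour rule above. This is a minimal sketch that assumes the queue folder is passed as the only command-line argument. It judges a file's age by its modification time and does not recurse into subfolders. The script and function names are hypothetical, not part of papermerge.

```python
import sys
import time
from pathlib import Path
from typing import List, Optional

# ciur: files in the queue directory older than 24 hours are safe to delete.
MAX_AGE_SECONDS = 24 * 60 * 60


def purge_old_queue_files(queue_dir: str, max_age: float = MAX_AGE_SECONDS,
                          now: Optional[float] = None) -> List[str]:
    """Delete regular files directly inside queue_dir whose modification time
    is more than max_age seconds before now. Returns the removed names, sorted.
    Subdirectories are left alone."""
    now = time.time() if now is None else now
    removed = []
    for entry in Path(queue_dir).iterdir():
        try:
            if entry.is_file() and now - entry.stat().st_mtime > max_age:
                entry.unlink()
                removed.append(entry.name)
        except FileNotFoundError:
            # A worker consumed the file between listing and deleting it.
            continue
    return sorted(removed)


if __name__ == "__main__" and len(sys.argv) == 2:
    for name in purge_old_queue_files(sys.argv[1]):
        print("removed", name)
```

The script could be scheduled daily from cron with a line such as `0 0 * * * python3 /path/to/purge_queue.py /path/to/queue`. Both paths are placeholders for your own installation. Because only files older than a full day are touched, a job queued just before the cron run is left in place.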

@maspiter commented on GitHub (Nov 6, 2020):

Is Redis support still in development? I ask because I see it in the Django development configuration.

@amo13 commented on GitHub (Nov 10, 2020):

I don't see where to adjust the configuration to use Redis. There is no `CELERY_BROKER_URL = "filesystem://"` in the `papermerge.conf.py` template configuration that I could replace with the Redis one.

@maspiter commented on GitHub (Nov 10, 2020):

It is in base.py

@amo13 commented on GitHub (Nov 10, 2020):

I can find it in this repo, but I can't find it on my filesystem after installation... maybe it is due to my packaging attempt on Arch Linux.

Can you please tell me the path of `base.py` relative to the papermerge install folder containing `core`, `search` and `test` on your system? Is it actually just `../config/settings/base.py`?

Where is the file to be used defined and can I change its location?

@maspiter commented on GitHub (Nov 10, 2020):

Could you elaborate what the problem is exactly?

The path is `papermerge/config/settings`, and that is where it should be after installation.

@amo13 commented on GitHub (Nov 10, 2020):

Ah, ok, now I have it and it seems to be working fine.

I install papermerge without a virtual env and without pip, using only the Arch Linux package manager. All dependencies are installed as individual packages, which has the advantage of keeping them all updated together with system updates.

Thanks for the help by the way 👍

@amo13 commented on GitHub (Nov 10, 2020):

To my understanding, the suggested line replacement (Redis instead of the filesystem transport) would have to be repeated after every update, because `base.py` itself might be updated and would then overwrite the modified copy from the previous version.

Is there a way to circumvent this?

@hactar commented on GitHub (Dec 20, 2020):

After running my papermerge instance for a few months, I found the CPU usage of an idle papermerge at 97%, and only after researching this did I find this ticket. Information on this needs to be included in the Docker documentation. Even better, the docker-compose/build setup should be adjusted to either use Redis by default or include a built-in cron job that deletes these files. Multiple people have already fallen into this hole; see the issues referencing this one.

This needs a default Docker fix, please @ciur. I suggest reopening this issue.

@okoetter commented on GitHub (Dec 23, 2020):

I second this. My CPU usage was >50% until I found this thread.

@ciur commented on GitHub (Dec 23, 2020):

ok, guys, I reopened the issue and I will update documentation + update document files

@jorisvc commented on GitHub (Dec 27, 2020):

Don't forget to install the REDIS server.

On Debian this is

Then restart your papermerge worker service.
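Before restarting the worker, you can confirm that the Redis server is actually reachable. This is a minimal standard-library sketch that assumes Redis listens on `localhost:6379` without a password. The helpers `encode_command` and `redis_ping` are hypothetical names. The code speaks Redis's RESP protocol directly: a command is sent as an array of bulk strings, and a healthy server answers `PING` with `+PONG`.

```python
import socket


def encode_command(*parts: str) -> bytes:
    """Encode a command as a RESP array of bulk strings."""
    out = [f"*{len(parts)}\r\n".encode()]
    for part in parts:
        data = part.encode()
        out.append(b"$" + str(len(data)).encode() + b"\r\n" + data + b"\r\n")
    return b"".join(out)


def redis_ping(host: str = "localhost", port: int = 6379,
               timeout: float = 2.0) -> bool:
    """Return True if the server at host:port answers PING with +PONG.

    A server that requires a password replies with an error instead,
    so this returns False in that case as well.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            sock.sendall(encode_command("PING"))
            return sock.recv(64).startswith(b"+PONG")
    except OSError:
        return False


if __name__ == "__main__":
    print("Redis reachable:", redis_ping())
```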

@ciur commented on GitHub (Dec 27, 2020):

@hactar, @okoetter, @amo13, @TheSpad,

I changed official docker compose file to use redis as message broker instead of filesystem based one.

Here is the diff.

And here is documentation update which describes how and why to configure redis as broker/message queue.

I also added an entry to the known issues, which in turn points to the configuration documentation.

@Haymotion commented on GitHub (Apr 19, 2023):

Must the new Celery configuration be applied to papermerge.backend or to papermerge.worker? Or to both Docker containers?

You also say one option is to regularly delete all files in the queue folder (this folder is created automatically in the same directory as the manage.py file). But what is the default name of this queue folder?

Thanks a lot for your response