mirror of

https://github.com/RD17/ambar.git

synced 2026-04-25 15:35:49 +03:00

[GH-ISSUE #147] Cannot view/download files #147


Originally created by @dandantheflyingman on GitHub (Apr 19, 2018).

Original GitHub issue: https://github.com/RD17/ambar/issues/147

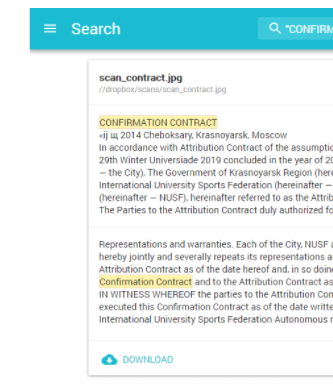

Hi,

I am struggling to understand how to access my files from the Web interface.

Is there meant to be a download button? I can find the image preview, but that is it.

@dandantheflyingman commented on GitHub (Apr 19, 2018):

Guess this is what I am looking for:

This button --^

Have I got a configuration error?

My install is a clean install of the new 2.0.0rc on Ubuntu 16.04, using the 2.0.0rc docker-compose.yml example.

I have tried ingesting the documents via upload and through a crawler. Both times the documents seem to be successfully analysed and added, but I cannot find a way to view or download them.

@sochix commented on GitHub (Apr 19, 2018):

Hi @dandantheflyingman it's a bug in rc, we'll fix it

@dandantheflyingman commented on GitHub (Apr 19, 2018):

Awesome, thanks! I was just concerned that I had something misconfigured.

@andrea-ligios commented on GitHub (May 2, 2018):

I guess turning `preserveOriginals` to `true` by default would solve the problem.

I'm quite curious about the way this will get fixed.

@sochix commented on GitHub (May 3, 2018):

@andrea-ligios nope, it won't solve the problem

@andrea-ligios commented on GitHub (May 3, 2018):

@sochix, first of all, thank you for answering.

Just to be sure I understand what's happening here (I'm new to Ambar):

If I'm not mistaken:

- In `1.3.0`, there was a script called `ambar.py` and a file called `config.json`, which have now been deprecated in favor of a full docker-compose installation.
- `preserveOriginals` was a parameter in the `config.json` file used to specify whether or not to save a binary copy of the file in MongoDB (in addition to the data extracted from it and stored in Elasticsearch).

My questions:

- Is `config.json` gone in `2.0.0rc`?
- If it's not available anymore, where can we specify whether or not to preserve the originals?

I mean, what should we be able to download, if the original file is gone? Are you referring to the other missing button, "TEXT"?

P.S: your product is really cool, thank you very much for releasing it!

@sochix commented on GitHub (May 3, 2018):

Now all config comes from the docker-compose file through env vars. In 2.0.0rc we accidentally removed the 'preserveOriginals' option.

In the next release we will fix the issue with storing original files. We plan to download them from the local crawler (not MongoDB). The TEXT button has been removed completely.
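For illustration only, here is a minimal sketch of how a service might read a boolean option from an environment variable set in a docker-compose file. The variable name `PRESERVE_ORIGINALS` and the helper `env_flag` are hypothetical and are not taken from Ambar's code or documentation.

```python
import os


def env_flag(name, default=False, environ=None):
    """Parse a boolean option from an environment variable.

    Hypothetical helper for illustration; not part of Ambar.
    """
    environ = os.environ if environ is None else environ
    raw = environ.get(name)
    if raw is None:
        # Variable not set: fall back to the caller's default.
        return default
    return raw.strip().lower() in {"1", "true", "yes", "on"}


# PRESERVE_ORIGINALS is an assumed name, not a documented Ambar setting.
preserve_originals = env_flag("PRESERVE_ORIGINALS", default=False)
```

With this approach, the value would be supplied under the service's `environment:` key in docker-compose.yml rather than in a separate `config.json`.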

@andrea-ligios commented on GitHub (May 10, 2018):

@sochix : sorry to bother you, but is there any public roadmap we can look at?

Otherwise, do you have some (coarse-grained) time window for the next release?

Thanks in advance,

Cheers

@sochix commented on GitHub (May 10, 2018):

We are open source and Ambar is not our full-time job.

But we received sponsorship from IFIC.co.uk last week, so the next release will be at the end of next week.

@andrea-ligios commented on GitHub (May 10, 2018):

Great news! Thanks for your answer

@denis1482 commented on GitHub (May 16, 2018):

Fixed in 2.1.8

@andrea-ligios commented on GitHub (May 16, 2018):

Thank you @sochix , I've tested it this morning and it works like this:

Have I got everything correct?

Again, great job 👍

@sochix commented on GitHub (May 17, 2018):

@andrea-ligios

Maybe you can help us with documenting Ambar?

@andrea-ligios commented on GitHub (May 21, 2018):

@sochix I need to get to know it myself first;

as soon as I go deeper, though, and assuming I find some free time, I could help.

Just tell me what you had in mind.

Cheers

@sochix commented on GitHub (May 22, 2018):

@andrea-ligios for example, you can help us fill in the FAQ on the front page