mirror of

https://github.com/s3fs-fuse/s3fs-fuse.git

synced 2026-04-25 05:16:00 +03:00

[GH-ISSUE #1740] s3fs-fuse and NFSv3: the client failed to write files into the S3 bucket. #894

Originally created by @shuangzai21 on GitHub (Aug 11, 2021).

Original GitHub issue: https://github.com/s3fs-fuse/s3fs-fuse/issues/1740

When I connect to the server from the client over NFSv3, and the server connects to the bucket through s3fs-fuse, the files that the client writes to the bucket are always incomplete: the uploaded objects differ from the cached files. Can anyone solve this problem?

@VVoidV commented on GitHub (Aug 16, 2021):

What is your kernel version? After I upgraded my kernel to 5.4.135, files are uploaded correctly.

I ran into this problem too on CentOS 7.6.1810 with kernel 3.10.0-957.el7.x86_64.

With async NFS exports, every copied file results in a 0-byte object.

With sync NFS exports, files smaller than 512K are consistent, but files larger than 512K result in a 0-byte object.

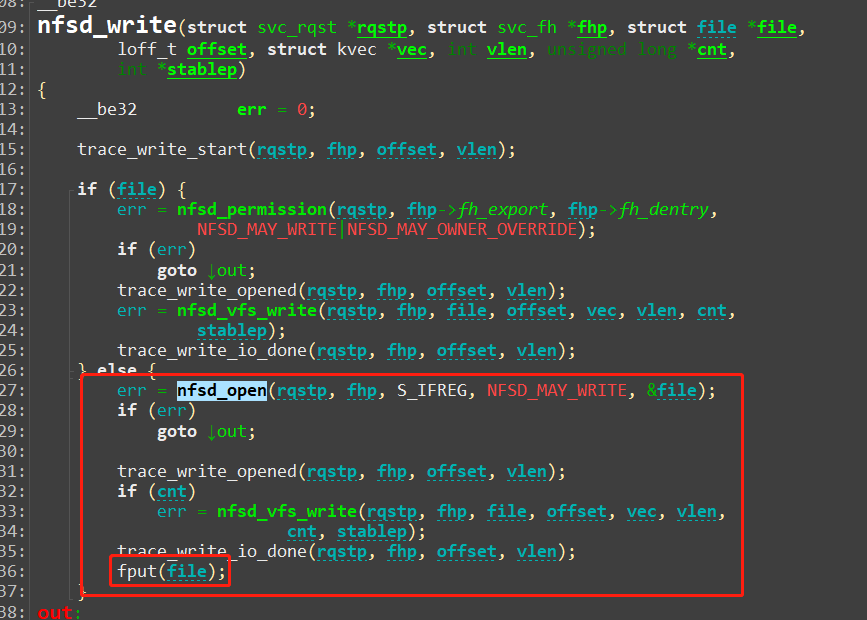

I think this problem is caused by nfsd_write: it opens the file, does the write, and calls fput without a flush. In this case s3fs loses the data held in its cache.

When I copy a 7-byte file from the NFS client, s3fs prints the following operation sequence:

unique: 11, opcode: MKNOD (8), nodeid: 1, insize: 62, pid: 10126

create flags: 0xc1 /test1 0100000 umask=0000

create[7] flags: 0xc1 /test1

getattr /test1

NODEID: 3

release[7] flags: 0xc1

unique: 11, success, outsize: 144

unique: 12, opcode: SETATTR (4), nodeid: 3, insize: 128, pid: 10126

utimens /test1 1113260032.000000000 728876565.000000000

getattr /test1

unique: 12, success, outsize: 120

unique: 13, opcode: REMOVEXATTR (24), nodeid: 3, insize: 53, pid: 10126

unique: 13, error: -38 (Function not implemented), outsize: 16

unique: 14, opcode: GETATTR (3), nodeid: 1, insize: 56, pid: 10126

getattr /

unique: 14, success, outsize: 120

unique: 15, opcode: SETATTR (4), nodeid: 3, insize: 128, pid: 10126

utimens /test1 1628651530.351042974 1628651530.351042974

getattr /test1

unique: 15, success, outsize: 120

unique: 16, opcode: GETXATTR (22), nodeid: 1, insize: 73, pid: 10126

unique: 16, error: -38 (Function not implemented), outsize: 16

unique: 17, opcode: OPEN (14), nodeid: 3, insize: 48, pid: 10126

open flags: 0x8001 /test1

open[7] flags: 0x8001 /test1

unique: 17, success, outsize: 32

unique: 18, opcode: WRITE (16), nodeid: 3, insize: 87, pid: 10126

write[7] 7 bytes to 0 flags: 0x8001

write[7] 7 bytes to 0

unique: 18, success, outsize: 24

unique: 19, opcode: GETATTR (3), nodeid: 3, insize: 56, pid: 10126

getattr /test1

unique: 20, opcode: RELEASE (18), nodeid: 3, insize: 64, pid: 0

release[7] flags: 0x8001

unique: 20, success, outsize: 16

unique: 19, success, outsize: 120

unique: 21, opcode: GETATTR (3), nodeid: 1, insize: 56, pid: 10125

getattr /

unique: 21, success, outsize: 120
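The missing flush can also be checked mechanically. The following is a minimal sketch, assuming the request header lines keep the `unique: N, opcode: NAME (num), nodeid: M` layout shown in the trace above; the function names `opcodes_per_node` and `written_without_flush` are hypothetical helpers, not part of s3fs or libfuse. It reports node IDs that received WRITE and RELEASE but never FLUSH.

```python
import re

# Matches the request header lines from the trace above, for example:
# "unique: 18, opcode: WRITE (16), nodeid: 3, insize: 87, pid: 10126"
REQUEST_RE = re.compile(r"unique: (\d+), opcode: (\w+) \((\d+)\), nodeid: (\d+)")


def opcodes_per_node(log_text):
    """Return {nodeid: [opcode names in order of appearance]}."""
    ops = {}
    for line in log_text.splitlines():
        match = REQUEST_RE.search(line)
        if match:
            nodeid = int(match.group(4))
            ops.setdefault(nodeid, []).append(match.group(2))
    return ops


def written_without_flush(ops):
    """Return sorted node IDs that saw WRITE and RELEASE but never FLUSH."""
    return sorted(
        nodeid
        for nodeid, names in ops.items()
        if "WRITE" in names and "RELEASE" in names and "FLUSH" not in names
    )


if __name__ == "__main__":
    # Four header lines copied from the trace above.
    sample = """\
unique: 17, opcode: OPEN (14), nodeid: 3, insize: 48, pid: 10126
unique: 18, opcode: WRITE (16), nodeid: 3, insize: 87, pid: 10126
unique: 19, opcode: GETATTR (3), nodeid: 3, insize: 56, pid: 10126
unique: 20, opcode: RELEASE (18), nodeid: 3, insize: 64, pid: 0
"""
    print(written_without_flush(opcodes_per_node(sample)))  # prints [3]
```

Running the same check on a trace from a newer kernel should show whether a FLUSH request appears for the written node before its RELEASE.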

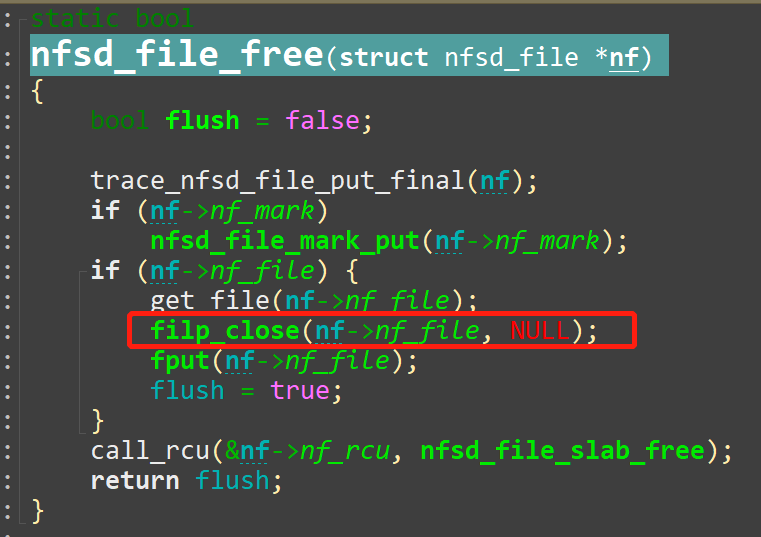

nfsd introduced a filecache for NFSv3 in 5.3-rc2. After I updated my kernel to ELRepo's kernel-lt (5.4.135), the problem disappeared. I think the reason is that the filecache keeps the file handle open and closes it only after it has been unused for 2s, using filp_close, which calls f_op->flush.
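To see quickly which side of that change an NFS server is on, a rough check of the running kernel release might look like the sketch below. The 5.3 threshold is taken from the comment above, not verified independently, and distribution kernels may backport or omit the change, so treat the result as a hint only. The helper names are hypothetical.

```python
import platform
import re

# Assumption taken from the comment above: nfsd gained its filecache in 5.3.
FILECACHE_SINCE = (5, 3)


def kernel_major_minor(release):
    """Extract (major, minor) from a string like '3.10.0-957.el7.x86_64'."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        raise ValueError(f"unrecognised kernel release: {release!r}")
    return int(match.group(1)), int(match.group(2))


def predates_nfsd_filecache(release):
    """True if the release is older than the assumed filecache threshold."""
    return kernel_major_minor(release) < FILECACHE_SINCE


if __name__ == "__main__":
    # Run this on the NFS server, not on the NFS client.
    release = platform.release()
    print(release, "predates filecache:", predates_nfsd_filecache(release))
```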

So, is there any solution to fix the data loss with 3.10 knfsd?

@shuangzai21 commented on GitHub (Aug 17, 2021):

Thank you. The client I use runs CentOS with kernel 3.10.0-1160.31.1.el7.x86_64. In addition, I think the data may have been lost before s3fs_write received it. Do you think this is a problem in the NFSv3 code?

@VVoidV commented on GitHub (Aug 17, 2021):

I mean, what is the kernel version of your NFS server (nfsd)? I tested with the 5.4.135 kernel and s3fs version 1.89, and the data is consistent.

In my case, s3fs_write does receive the data.

The problem is that nfsd_write does not call s3fs_flush, so the data remains in the s3fs cache; when fput calls s3fs_release, the cache file is closed without being uploaded.

Maybe you could mount your bucket with s3fs -o debug,dbglevel=debug,logfile=$LOGFILE,$OTHEROPTION; you will see the operation sequence and more information in $LOGFILE.

@gaul commented on GitHub (Aug 17, 2021):

I don't understand the NFS problem -- if an application writes data to internal buffers without flushing to the kernel then the eventual close should automatically flush?

@shuangzai21 commented on GitHub (Aug 17, 2021):

nfsstat --version

Nfsstat: 1.3.0

The file is uploaded successfully through NFSv4, but the upload fails with NFSv3.

@VVoidV commented on GitHub (Aug 17, 2021):

nfsd runs in kernel space. With NFSv3 it handles every write request in that context: it opens the file, writes the data, then calls fput. fput calls f_op->release without a flush. In the 5.4 kernel's nfsd, the file is closed by filp_close, which calls f_op->flush and then fput().

By the way, I tested nfsd with XFS, and the data is consistent.
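The difference between the two close paths can be illustrated with a toy model. This is a sketch only: `ToyWriteBackFile`, `close_like_fput`, and `close_like_filp_close` are hypothetical names, and the class is neither s3fs nor kernel code. It simply assumes, as described in this thread, that buffered data is uploaded on flush and that release closes the cache file without uploading.

```python
class ToyWriteBackFile:
    """Toy stand-in for a file system that buffers writes in a local cache."""

    def __init__(self):
        self.cache = bytearray()  # local cache, filled by write()
        self.uploaded = b""       # what the remote object would contain
        self.calls = []           # order of operations, for inspection

    def write(self, data):
        self.calls.append("write")
        self.cache += data

    def flush(self):
        # In this model, the upload happens only here.
        self.calls.append("flush")
        self.uploaded = bytes(self.cache)

    def release(self):
        # Closes the cache file; nothing is uploaded.
        self.calls.append("release")
        self.cache.clear()


def close_like_fput(f):
    """Close path described for 3.10 nfsd: release without flush."""
    f.release()


def close_like_filp_close(f):
    """Close path described for 5.4 nfsd: flush, then release."""
    f.flush()
    f.release()


if __name__ == "__main__":
    old = ToyWriteBackFile()
    old.write(b"payload")
    close_like_fput(old)
    print(old.calls, old.uploaded)  # ['write', 'release'] b''

    new = ToyWriteBackFile()
    new.write(b"payload")
    close_like_filp_close(new)
    print(new.calls, new.uploaded)  # ['write', 'flush', 'release'] b'payload'
```

A file system such as XFS does not depend on flush to persist written data, which would be consistent with the XFS result above.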

@shuangzai21 commented on GitHub (Aug 26, 2021):

A sincere question: have you solved the problem of NFSv3 uploads to an s3fs bucket without upgrading the kernel (that is, on kernel 3.10.0)?

@shuangzai21 commented on GitHub (Sep 2, 2021):

@gaul, can you help me solve this problem?

I am asking sincerely.

@CarstenGrohmann commented on GitHub (Oct 7, 2021):

NFS uses close-to-open consistency. This guarantees that all data has been transferred to and confirmed by the NFS server once the application has closed the file.

The behaviour of NFS clients before "close()" is undefined. The local operating system can and will cache data. Therefore, you can only perform an end-to-end check after the application has closed the file.

I suggest testing your two layers (application to NFSD and NFSD to S3) separately.
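One way to run such a per-layer check is to copy a file, close it, and only then compare checksums. The sketch below assumes nothing about s3fs or NFS beyond ordinary file access; `copy_and_verify` and `sha256_of` are hypothetical helper names, and the paths you pass in are up to you.

```python
import hashlib
import os
import shutil


def sha256_of(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def copy_and_verify(source, destination):
    """Copy source to destination, close both, then compare checksums."""
    with open(source, "rb") as src, open(destination, "wb") as dst:
        shutil.copyfileobj(src, dst)
        dst.flush()
        os.fsync(dst.fileno())
    # Both files are closed at this point, so the comparison happens
    # after close(), as close-to-open consistency requires.
    return sha256_of(source) == sha256_of(destination)
```

Running it once with a destination on the NFS mount and once directly on the s3fs mount of the server separates the two layers. Note that reading back on the same host may be served from a local cache, so comparing against the object actually stored in the bucket is the stricter test.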

@zulaysalas commented on GitHub (Jul 7, 2023):

Hi @shuangzai21, were you able to resolve the issue with s3fs-fuse on the NFS client? In my case, the cp, mv, touch, and rsync commands all return an error when run on the NFS client. Was anyone able to solve this problem?