mirror of

https://github.com/s3fs-fuse/s3fs-fuse.git

synced 2026-04-25 21:35:58 +03:00

[GH-ISSUE #1402] Timestamp not updated on the s3fs mount point of same file ( without content change) #744


Originally created by @kddon on GitHub (Sep 15, 2020).

Original GitHub issue: https://github.com/s3fs-fuse/s3fs-fuse/issues/1402

Additional Information

The following information is very important in order to help us to help you. Omission of the following details may delay your support request or receive no attention at all.

Keep in mind that the commands we provide to retrieve information are oriented to GNU/Linux Distributions, so you could need to use others if you use s3fs on macOS or BSD

Version of s3fs being used (s3fs --version)

example: 1.00

V1.87

Version of fuse being used (pkg-config --modversion fuse, rpm -qi fuse, dpkg -s fuse)

example: 2.9.4

2.9.2

Kernel information (uname -r)

command result: uname -r

4.14.192-147.314.amzn2.x86_64

GNU/Linux Distribution, if applicable (cat /etc/os-release)

command result: cat /etc/os-release

Amazon Linux

s3fs command line used, if applicable

/etc/fstab entry, if applicable

s3fs syslog messages (grep s3fs /var/log/syslog, journalctl | grep s3fs, or s3fs outputs)

if you execute s3fs with dbglevel, curldbg option, you can get detail debug messages

Details about issue

We are using s3fs to mount an S3 bucket on a local drive. When we upload the same file (without any content change) to the S3 bucket, the file's timestamp changes in S3, but the s3fs mount point still shows the old timestamp.

If we change the content of the file and then upload it to the S3 bucket, the timestamp changes both in S3 and on the s3fs mount point. This does not happen when the content is unchanged.

Please advise.

@gaul commented on GitHub (Sep 15, 2020):

integration-test-main.sh has test_update_time which should exercise this. Can you look at this and provide a complete test that demonstrates your symptoms?

@kddon commented on GitHub (Sep 15, 2020):

How can I execute integration-test-main.sh? Are any parameters required?

@gaul commented on GitHub (Sep 15, 2020):

You can run the tests if you check out the master branch from GitHub then run make and make check -C test. (You can find this information in .travis.yml)

@kddon commented on GitHub (Sep 16, 2020):

Hi, I have executed make check -C test and am getting the error below.

make: Entering directory `/root/s3fs-fuse/test'

make check-TESTS

make[1]: Entering directory `/root/s3fs-fuse/test'

make[2]: Entering directory `/root/s3fs-fuse/test'

FAIL: small-integration-test.sh

make[3]: Entering directory `/root/s3fs-fuse/test'

make[3]: Nothing to be done for `all'.

make[3]: Leaving directory `/root/s3fs-fuse/test'

Testsuite summary for s3fs 1.87

TOTAL: 1

PASS: 0

SKIP: 0

XFAIL: 0

FAIL: 1

XPASS: 0

ERROR: 0

============================================================================

See test/test-suite.log

make[2]: *** [test-suite.log] Error 1

make[2]: Leaving directory `/root/s3fs-fuse/test'

make[1]: *** [check-TESTS] Error 2

make[1]: Leaving directory `/root/s3fs-fuse/test'

make: *** [check-am] Error 2

make: Leaving directory `/root/s3fs-fuse/test'

Contents of test-suite.log:

s3fs 1.87: test/test-suite.log

TOTAL: 1

PASS: 0

SKIP: 0

XFAIL: 0

FAIL: 1

XPASS: 0

ERROR: 0

.. contents:: :depth: 2

FAIL: small-integration-test.sh

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

stdbuf: failed to run command ‘java’: No such file or directory

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

integration-test-common.sh: connect: Connection refused

integration-test-common.sh: line 144: /dev/tcp/127.0.0.1/8080: Connection refused

testing s3fs flag: use_cache=/tmp/s3fs-cache -o ensure_diskfree=796

Trying: grep -q s3fs-integration-test /proc/mounts

s3fs: [CRT] sighandlers.cpp:SetLogLevel(154): change debug level from [CRT] to [INF]

s3fs: [INF] s3fs.cpp:set_mountpoint_attribute(3930): PROC(uid=0, gid=0) - MountPoint(uid=0, gid=0, mode=40750)

s3fs: [INF] curl.cpp:InitMimeType(422): Loaded mime information from /etc/mime.types

s3fs: [INF] s3fs.cpp:s3fs_init(3242): init v1.87(commit:c58c91f) with OpenSSL

s3fs: [INF] s3fs.cpp:do_create_bucket(854): /

s3fs: [INF] curl.cpp:PutRequest(3022): [tpath=/]

s3fs: [INF] curl.cpp:PutRequest(3040): create zero byte file object.

s3fs: [INF] curl_util.cpp:prepare_url(243): URL is https://127.0.0.1:8080/s3fs-integration-test/

s3fs: [INF] curl_util.cpp:prepare_url(276): URL changed is https://127.0.0.1:8080/s3fs-integration-test/

s3fs: [INF] curl.cpp:PutRequest(3117): uploading... [path=/][fd=-1][size=0]

s3fs: [INF] curl.cpp:insertV4Headers(2556): computing signature [PUT] [/] [] []

s3fs: [INF] curl_util.cpp:url_to_host(320): url is https://127.0.0.1:8080

s3fs: [ERR] curl.cpp:RequestPerform(2296): ### CURLE_COULDNT_CONNECT

Retrying: grep -q s3fs-integration-test /proc/mounts

Trying: grep -q s3fs-integration-test /proc/mounts

s3fs: [INF] curl.cpp:RequestPerform(2395): ### retrying...

s3fs: [INF] curl.cpp:RemakeHandle(2018): Retry request. [type=3][url=https://127.0.0.1:8080/s3fs-integration-test/][path=/]

s3fs: [ERR] curl.cpp:RequestPerform(2296): ### CURLE_COULDNT_CONNECT

s3fs: [INF] curl.cpp:RequestPerform(2395): ### retrying...

s3fs: [INF] curl.cpp:RemakeHandle(2018): Retry request. [type=3][url=https://127.0.0.1:8080/s3fs-integration-test/][path=/]

s3fs: [ERR] curl.cpp:RequestPerform(2296): ### CURLE_COULDNT_CONNECT

s3fs: [INF] curl.cpp:RequestPerform(2395): ### retrying...

s3fs: [INF] curl.cpp:RemakeHandle(2018): Retry request. [type=3][url=https://127.0.0.1:8080/s3fs-integration-test/][path=/]

s3fs: [ERR] curl.cpp:RequestPerform(2413): ### giving up

s3fs: [INF] curl_handlerpool.cpp:ReturnHandler(110): Pool full: destroy the oldest handler

s3fs: [ERR] s3fs.cpp:s3fs_exit_fuseloop(3232): Exiting FUSE event loop due to errors

s3fs:

s3fs: [INF] s3fs.cpp:s3fs_destroy(3300): destroy

mkdir: cannot create directory ‘s3fs-integration-test’: Software caused connection abort

integration-test-common.sh: line 158: kill: (5693) - No such process

Please look into this.

@gaul commented on GitHub (Sep 16, 2020):

Your log lines contain:

stdbuf: failed to run command ‘java’: No such file or directory

You need to install java. Please do a minimal amount of investigation on your own!
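
Before re-running the tests, the two failures visible in the log (a missing java binary and nothing answering on 127.0.0.1:8080) can be checked by hand. The following is a minimal sketch, not part of the s3fs test suite: the helper names `have_cmd` and `port_open` are made up for illustration, and the port check assumes bash, since it relies on the same /dev/tcp redirection that the log lines from integration-test-common.sh point to.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for illustration; they are not part of s3fs or its tests.

# Succeeds if the named command can be found on PATH.
have_cmd() {
    command -v "$1" >/dev/null 2>&1
}

# Succeeds if something accepts TCP connections on host ($1) and port ($2).
# The connection is opened in a subshell so the descriptor closes on exit.
port_open() {
    (exec 3<>"/dev/tcp/$1/$2") 2>/dev/null
}

have_cmd java || echo "java not found on PATH"
port_open 127.0.0.1 8080 || echo "nothing listening on 127.0.0.1:8080"
```

If the first message appears, installing a Java runtime is the first step. The second message is expected while the tests are not running, because the test harness itself appears to start the local server on that port.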

@kddon commented on GitHub (Sep 17, 2020):

Hello,

I have installed Java and am still getting an error.

make: Entering directory `/root/s3fs-fuse/test'

make check-TESTS

make[1]: Entering directory `/root/s3fs-fuse/test'

make[2]: Entering directory `/root/s3fs-fuse/test'

FAIL: small-integration-test.sh

make[3]: Entering directory `/root/s3fs-fuse/test'

make[3]: Nothing to be done for `all'.

make[3]: Leaving directory `/root/s3fs-fuse/test'

Testsuite summary for s3fs 1.87

TOTAL: 1

PASS: 0

SKIP: 0

XFAIL: 0

FAIL: 1

XPASS: 0

ERROR: 0

============================================================================

See test/test-suite.log

make[2]: *** [test-suite.log] Error 1

make[2]: Leaving directory `/root/s3fs-fuse/test'

make[1]: *** [check-TESTS] Error 2

make[1]: Leaving directory `/root/s3fs-fuse/test'

make: *** [check-am] Error 2

make: Leaving directory `/root/s3fs-fuse/test'

Please look at the attached log file and let us know.

test-suite.log

@gaul commented on GitHub (Sep 17, 2020):

test_cache_file_stat fails for an unrelated reason about command line flags and pids (probably another instance of s3fs running). But the time tests pass, so even if this worked you would still have your original problem. So can you share some way to reproduce the time problem you experienced?

@kddon commented on GitHub (Sep 17, 2020):

Hi,

I uploaded welcome.txt (the same file, with no content change) to the S3 bucket that is mounted on a local drive using s3fs. The file's timestamp is updated in the S3 bucket but not on the local mount point. This is the main problem we are facing. Please look into it as a priority.

@kddon commented on GitHub (Sep 21, 2020):

Any update on this?

@kddon commented on GitHub (Sep 21, 2020):

Still the same issue. Please look into it as a priority.

@kddon commented on GitHub (Sep 30, 2020):

Hello Team,

Any update on this? Is there a fix for this issue?

@gaul commented on GitHub (Sep 30, 2020):

There is no update on this because you haven't described how to reproduce the problem and then closed the issue. Please provide a self-contained test case which demonstrates your issue.

@kddon commented on GitHub (Sep 30, 2020):

Hello Team,

Please find below the test case demonstration.

welcome.txt Sep30, 2020 7:03:03PM GMT +0530 47.0 B

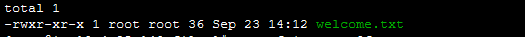

The timestamp of the welcome.txt file on the s3fs mount point on the server is below.

-rwxr-xr-x 1 root root 47 Sep 30 13:33 welcome.txt

welcome.txt Sep30, 2020 7:10:30PM GMT +0530 47.0 B

After the second upload, the timestamp of the welcome.txt file is still not updated on the server.

-rwxr-xr-x 1 root root 47 Sep 30 13:33 welcome.txt
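
To compare the console times with the `ls` output, it helps to put both in the same time zone. The sketch below converts the two console timestamps to UTC. It assumes GNU `date` and assumes the server clock shows UTC, which the thread does not state; `to_utc` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Convert a timestamp carrying an explicit UTC offset to UTC.
# Requires GNU date (the -d option); LC_ALL=C keeps the month name in English.
to_utc() {
    LC_ALL=C date -u -d "$1" '+%b %d %H:%M'
}

to_utc "2020-09-30 19:03:03 +0530"   # first upload  -> Sep 30 13:33
to_utc "2020-09-30 19:10:30 +0530"   # second upload -> Sep 30 13:40
```

Under those assumptions, the 13:33 shown on the mount point corresponds to the first upload, and a refreshed listing would be expected to show 13:40 after the second one.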

We are using the below command to mount the s3 bucket.

/usr/local/bin/s3fs -o use_cache=/tmp,iam_role="< iam role>",allow_other,umask=022

Please let us know in case of any concern in the above test case.

@kddon commented on GitHub (Oct 5, 2020):

Hello Team,

Any update on this?

@ggtakec commented on GitHub (Oct 5, 2020):

@kddon

We merged #1437 PR into the master branch.

This change adds atime, which was not previously handled by s3fs, and handles ctime/mtime correctly.

I copied (cp) files from outside the s3fs mount point into it, then copied them again, and checked the output of the stat command after each copy.

With the code in the current master branch, the times were updated correctly in my tests.

Please note that if you use another tool to create (overwrite) the file and upload it to S3, what you see through s3fs will be affected by the s3fs stat cache.

@kddon commented on GitHub (Oct 5, 2020):

Let me check and will update you

@kddon commented on GitHub (Oct 5, 2020):

Hello Team,

Please find below.

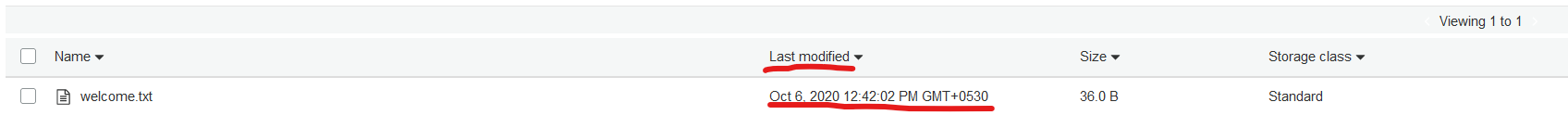

We uploaded the file welcome.txt to the S3 bucket again. The timestamp in S3 is below.

welcome.txt Oct 5, 2020 8:36:00PM GMT +0530 47.0 B

The timestamp of the welcome.txt file on the s3fs mount point on the server is below.

The timestamp is still not updated on the s3fs mount point.

-rwxr-xr-x 1 root root 47 Sep 30 13:40 welcome.txt

I checked out fresh code and compiled it. I did not use any tool to upload the file to the S3 bucket; I uploaded it directly.

After the upload, the date and time are updated in the S3 console, but the s3fs mount point still shows the old date and time.

Please let me know.

@ggtakec commented on GitHub (Oct 5, 2020):

@kddon

I suspect the file is not actually being uploaded (copied) to your S3 bucket.

First of all, I think you should use the AWS console or a similar tool to check whether the file object has been replaced.

It is unlikely to solve this problem, but if you are willing, you could also try clearing the cache files used by s3fs once.

(The cache files are /tmp/, /tmp/..stat and /tmp/..mirror, given your boot options.)

@kddon commented on GitHub (Oct 5, 2020):

Hi @ggtakec ,

Just to confirm, we are placing the files in S3 from AWS console. The timestamp is updated in S3. We are expecting the files in Linux S3fs filesystem to have same timestamp as the files in S3. The issue is, in Linux S3fs it shows the old timestamp.

@ggtakec commented on GitHub (Oct 5, 2020):

@kddon Are you uploading the welcome.txt file from the AWS console to S3?

In this case, I think that only Last-Modified is set on the welcome.txt object.

Is this Last-Modified updated when you re-upload the file?

When a file is uploaded from the AWS console, it has none of the headers (x-amz-meta-*) that s3fs normally uses, so s3fs falls back to Last-Modified.

That is, the displayed time depends on the value of Last-Modified.

If the welcome.txt object has Last-Modified or x-amz-meta-* set and s3fs still does not display the value correctly, set the max_stat_cache_size option to 0 and restart s3fs.

This prevents s3fs from caching the stat information of the file.
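
As a rough sketch of what that suggestion could look like on the command line, the helper below prints (it does not run) an s3fs invocation based on the options quoted earlier in the thread, with `max_stat_cache_size=0` appended. The function name, `my-bucket`, and `/mnt/s3` are placeholders, since the thread does not give the real bucket or mount point, and the `iam_role` option from the original command is left out here and would need to be added back.

```shell
#!/usr/bin/env bash
# Print an s3fs command line with the stat cache disabled.
# Hypothetical helper: the bucket and mount point arguments are placeholders.
s3fs_cmd_no_stat_cache() {
    local bucket=$1 mountpoint=$2
    # Options other than max_stat_cache_size=0 are copied from the thread.
    printf '%s\n' "s3fs $bucket $mountpoint -o use_cache=/tmp,allow_other,umask=022,max_stat_cache_size=0"
}

s3fs_cmd_no_stat_cache my-bucket /mnt/s3
```

With the stat cache disabled, s3fs has to ask S3 for object metadata more often, so this trades extra requests for fresher timestamps when objects are changed outside the mount.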

@kddon commented on GitHub (Oct 6, 2020):

Hi @ggtakec ,

Yes, I'm uploading welcome.txt from the AWS console to S3.

Yes, the Last-Modified column is updated in S3 when I upload,

but it is not updated in the s3fs filesystem.

I'll set the max_stat_cache_size option to 0, restart s3fs, and give it a try. I'll let you know the result.

@kddon commented on GitHub (Oct 7, 2020):

This has worked.

Thank you so much for the help!