mirror of

https://github.com/s3fs-fuse/s3fs-fuse.git

synced 2026-04-25 13:26:00 +03:00

[GH-ISSUE #1346] The moved file length become zero when disk space is insufficient #721

Originally created by @liuyongqing on GitHub (Jul 31, 2020).

Original GitHub issue: https://github.com/s3fs-fuse/s3fs-fuse/issues/1346

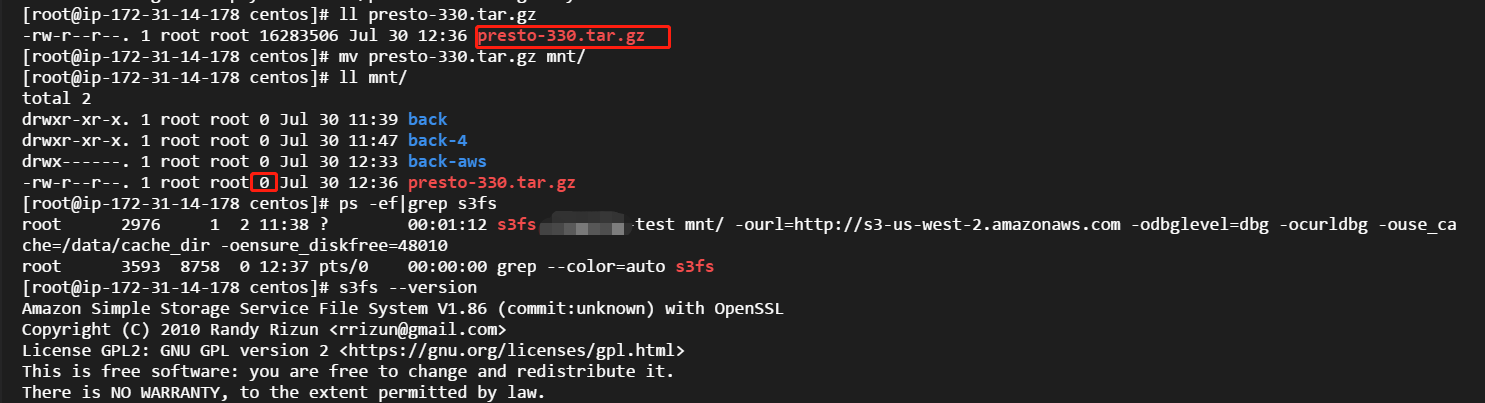

Version: S3FS 1.8.6

OS: CentOS

Description:

When disk space is insufficient and we use the mv command to move a file to the s3fs mount point, the object that ends up in S3 has zero length. The problem is easy to reproduce with -oensure_diskfree.
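Until the root cause is fixed, a caller can guard against this kind of data loss by checking the destination size before removing the source. The following is only a minimal sketch of that idea, not part of s3fs: `verified_move` is a hypothetical helper name, and it assumes that the size reported through the mount point reflects the object actually stored in S3, which may not hold in every caching configuration.

```python
import os
import shutil


def verified_move(src, dst, copy=shutil.copyfile):
    """Copy src to dst, compare sizes, and delete src only if they match.

    `copy` is injectable so the failure path can be exercised in tests.
    """
    expected = os.path.getsize(src)
    copy(src, dst)
    actual = os.path.getsize(dst)
    if actual != expected:
        # Keep the source file so the data is not lost.
        raise OSError(
            f"size mismatch after copy: expected {expected} bytes, got {actual}"
        )
    os.remove(src)
    return actual
```

Unlike a plain mv across filesystems, this keeps the source file whenever the destination does not have the expected length, so a zero-length result is detected instead of silently replacing the data.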

Analysis:

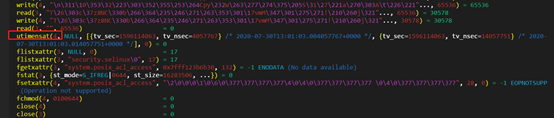

The following is what happens when disk space is sufficient:

According to the above analysis, an unexpected zero-length object is left in S3; in other cases, the data is corrupted.

Solution:

@gaul commented on GitHub (Aug 16, 2020):

Please test with the latest master and reopen if symptoms persist.