mirror of

https://github.com/hibiken/asynq.git

synced 2026-04-25 23:15:51 +03:00

[GH-ISSUE #612] [BUG] asynq: task lease expired, What does it mean? #299

Originally created by @XTeam-Wing on GitHub (Feb 11, 2023).

Original GitHub issue: https://github.com/hibiken/asynq/issues/612

Originally assigned to: @hibiken on GitHub.

What is the reason for this error? If the queue is wrong, no error information is shown.

@linhbkhn95 commented on GitHub (Feb 14, 2023):

Hi @XTeam-Wing

I think there may be a problem in your code or infrastructure.

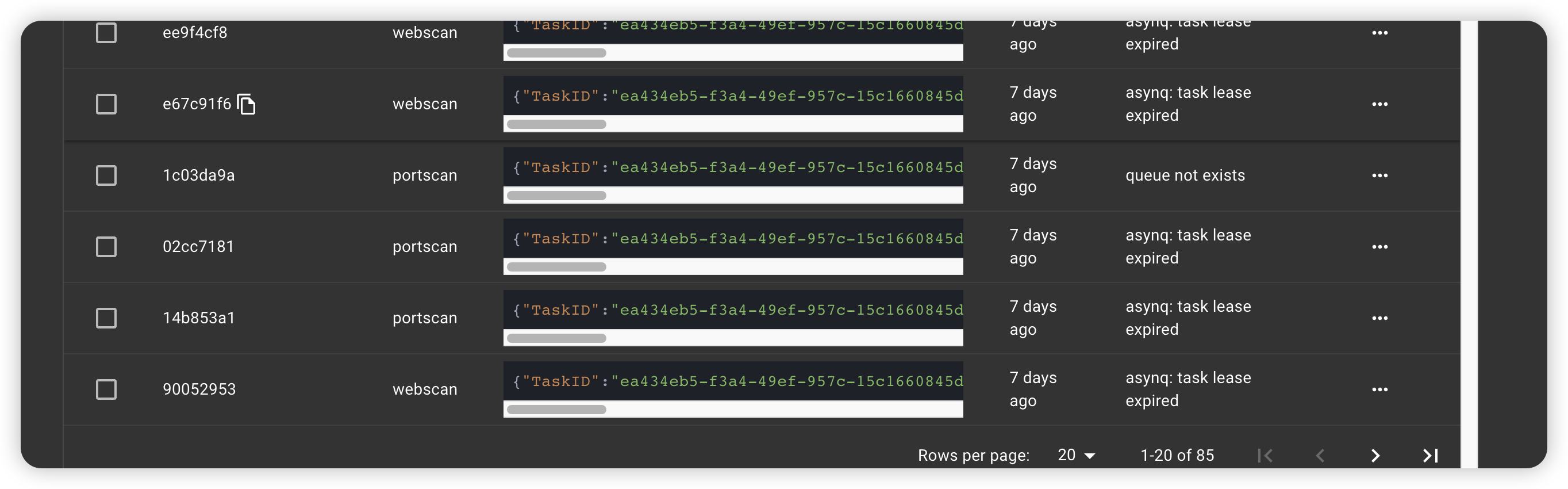

The image above shows a "queue does not exist" error. That suggests no worker processed this task, so its processing time expired.

I'm not sure, though.

Feel free to provide your code here to have full overview context.

@XTeam-Wing commented on GitHub (Feb 24, 2023):

I think `mux.HandleFunc` should return a clear error so that users can locate where the error occurred. Thank you for your reply.

@linhbkhn95 commented on GitHub (Feb 27, 2023):

Can you show the enqueued task? The handler must be mapped to the task type correctly.

@XTeam-Wing commented on GitHub (Mar 8, 2023):

Hi, I got a more specific error

@XTeam-Wing commented on GitHub (May 22, 2023):

@linhbkhn95 Hi, master.

I think the handler is not the problem.

@Xenov-X commented on GitHub (Jan 12, 2024):

@XTeam-Wing - Did you find a solution to this?

@XTeam-Wing commented on GitHub (Jan 13, 2024):

Not yet, but I made some changes, and this feature doesn't affect me.

@william9x commented on GitHub (Apr 3, 2024):

@XTeam-Wing I'm facing the same issue. Do you mind sharing what changes you made? Thank you!

@Xenov-X commented on GitHub (Apr 3, 2024):

Just to share what I found when doing further testing, which may help you resolve the issue.

My use case:

My issue seemed to arise when performing high network throughput tasks on EC2, which triggered some kind of rate limiting on outbound network traffic. The remote workers failed to reach the Redis DB to perform their heartbeat for an extended period of time, which resulted in the server recording the tasks as failed with `task lease expired`.

I guess the point I'm trying to make is that the error is due to heartbeats not happening, so the task lease doesn't get extended.
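
The relationship between heartbeats and the lease can be illustrated with a small model. This is only a sketch using the standard library, not asynq's actual implementation: the `Lease` type, its `Extend` and `Expired` methods, the `at` helper, and the 30-second `leaseDuration` are all assumptions made up for the example.

```go
package main

import (
	"fmt"
	"time"
)

// leaseDuration is an arbitrary value chosen for this sketch.
const leaseDuration = 30 * time.Second

// epoch is a fixed reference time so the example is deterministic.
var epoch = time.Date(2024, time.April, 3, 12, 0, 0, 0, time.UTC)

// at returns the instant sec seconds after epoch.
func at(sec int) time.Time {
	return epoch.Add(time.Duration(sec) * time.Second)
}

// Lease is a hypothetical model of a task lease: the worker owns the task
// until expiresAt, unless a heartbeat extends the lease first.
type Lease struct {
	expiresAt time.Time
	duration  time.Duration
}

// NewLease grants a lease that starts at now and lasts for d.
func NewLease(now time.Time, d time.Duration) *Lease {
	return &Lease{expiresAt: now.Add(d), duration: d}
}

// Expired reports whether the lease has run out at the given instant.
func (l *Lease) Expired(now time.Time) bool {
	return !now.Before(l.expiresAt)
}

// Extend models a successful heartbeat. It pushes the expiry forward and
// returns true, or returns false if the lease had already expired.
func (l *Lease) Extend(now time.Time) bool {
	if l.Expired(now) {
		return false
	}
	l.expiresAt = now.Add(l.duration)
	return true
}

func main() {
	lease := NewLease(at(0), leaseDuration)

	// Regular heartbeats keep pushing the expiry forward.
	for _, sec := range []int{10, 20, 30} {
		fmt.Printf("t=%ds heartbeat ok: %v\n", sec, lease.Extend(at(sec)))
	}

	// The worker cannot reach the server for 45 seconds, which is longer
	// than the lease, so the next heartbeat arrives too late.
	fmt.Printf("t=75s expired: %v\n", lease.Expired(at(75)))
	fmt.Printf("t=75s late heartbeat ok: %v\n", lease.Extend(at(75)))
}
```

In this model the task itself may still be running fine; the lease expires purely because the heartbeats stopped arriving.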

The fix in my case was to apply rate limiting to the task actions to ensure the AWS rate limit issue wasn't triggered.
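
One possible way to pace a task's outbound actions is a ticker-based throttle. The sketch below is not the code used in this case, which was not shared; `Throttle`, `sendAll`, and the 20 ms `minInterval` are hypothetical names and values, and only the Go standard library is used.

```go
package main

import (
	"fmt"
	"time"
)

// minInterval is an arbitrary pacing value chosen for this sketch.
const minInterval = 20 * time.Millisecond

// Throttle lets one action proceed per tick of its ticker.
type Throttle struct {
	ticker *time.Ticker
}

// NewThrottle creates a throttle that releases one action per interval.
func NewThrottle(interval time.Duration) *Throttle {
	return &Throttle{ticker: time.NewTicker(interval)}
}

// Wait blocks until the next tick.
func (t *Throttle) Wait() { <-t.ticker.C }

// Stop releases the ticker's resources.
func (t *Throttle) Stop() { t.ticker.Stop() }

// sendAll is a hypothetical task action. It passes each chunk to send,
// waiting on the throttle before every call so that outbound traffic
// stays below one send per interval.
func sendAll(t *Throttle, chunks []string, send func(string) error) error {
	for _, c := range chunks {
		t.Wait()
		if err := send(c); err != nil {
			return fmt.Errorf("send %q: %w", c, err)
		}
	}
	return nil
}

func main() {
	th := NewThrottle(minInterval)
	defer th.Stop()

	start := time.Now()
	err := sendAll(th, []string{"a", "b", "c"}, func(chunk string) error {
		fmt.Printf("%v sent %s\n", time.Since(start).Round(time.Millisecond), chunk)
		return nil
	})
	if err != nil {
		fmt.Println("error:", err)
	}
}
```

Pacing the work this way trades throughput for headroom, which leaves the heartbeat traffic a better chance of getting through.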

I'd look at your network path from the worker to the Redis port and see if there's anything that could be stopping the heartbeat connection from occurring (even intermittently).

@dmitrii-doronin commented on GitHub (Jan 14, 2026):

This issue is still relevant. Has anyone found a solution to this?

@armistcxy commented on GitHub (Jan 14, 2026):

I have encountered this situation a few times in a staging environment when I suddenly killed the pod that runs the worker.

IMO the simplest solution is to write a background job or register a scheduled task that runs these "crashed" tasks again. (Be careful with the state of the crashed tasks and with idempotency, by the way.)

The comment from Xenov also makes sense. As I said, if it is safe to retry, then just retry the task.
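
To make the idempotency point concrete, here is a minimal sketch of a guard that makes re-running a task safe. It is an illustration only: `Ledger`, `RunOnce`, and `errCrash` are hypothetical names, and the completed-task record is kept in memory, whereas surviving a real worker crash would need a durable store such as a database.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
)

// errCrash stands in for a failure that interrupts a task.
var errCrash = errors.New("worker crashed mid-task")

// Ledger records which task IDs have completed. It is in-memory for
// illustration only; a real deployment would need durable storage.
type Ledger struct {
	mu   sync.Mutex
	done map[string]bool
}

// NewLedger returns an empty ledger.
func NewLedger() *Ledger {
	return &Ledger{done: make(map[string]bool)}
}

// RunOnce runs fn for taskID unless that ID has already completed.
// It reports whether fn was executed. A failed run is not recorded,
// so the same task can be retried later. Holding the lock while fn
// runs keeps the sketch simple but serializes all tasks.
func (l *Ledger) RunOnce(taskID string, fn func() error) (bool, error) {
	l.mu.Lock()
	defer l.mu.Unlock()
	if l.done[taskID] {
		return false, nil
	}
	if err := fn(); err != nil {
		return true, err
	}
	l.done[taskID] = true
	return true, nil
}

func main() {
	ledger := NewLedger()
	attempts := 0
	work := func() error {
		attempts++
		if attempts == 1 {
			return errCrash // the first attempt fails
		}
		return nil
	}

	// A retry job can call RunOnce repeatedly; completed work is skipped.
	for i := 1; i <= 3; i++ {
		ran, err := ledger.RunOnce("task-42", work)
		fmt.Printf("attempt %d: ran=%v err=%v\n", i, ran, err)
	}
}
```

With a guard like this, a scheduled job can blindly resubmit every task it suspects has crashed, because tasks that already finished are skipped.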