mirror of

https://github.com/hibiken/asynq.git

synced 2026-04-26 15:35:55 +03:00

[GH-ISSUE #314] [BUG] The high priority queue is processed very slowly compared to the lower queue #1146


Originally created by @duyhungtnn on GitHub (Aug 20, 2021).

Original GitHub issue: https://github.com/hibiken/asynq/issues/314

Originally assigned to: @hibiken on GitHub.

Describe the bug

The high-priority queue is processed very slowly compared to the lower-priority queue.

To Reproduce

Steps to reproduce the behavior (Code snippets if applicable):

- `default` queue. Many small but critical tasks are issued from here and added to the `critical` queue
- The `default` queue holds all of the worker pool all the time
- The `critical` queue is stuck, and no task is processed

Expected behavior

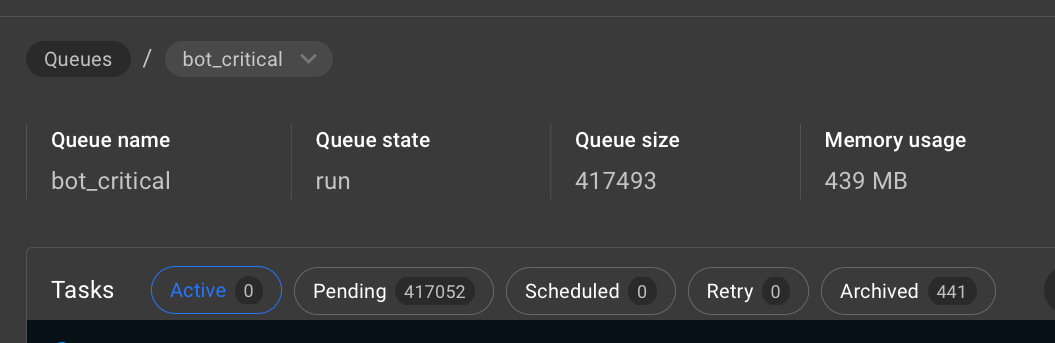

The `critical` queue's tasks need to be selected for processing first and cleared out quickly.

Screenshots

Set up the server with 3 queues

Enqueue a critical task like this

The `critical` queue is stuck

The `default` queue is running on all other workers

Environment (please complete the following information):

- `asynq` package 0.18.3

@crossworth commented on GitHub (Aug 20, 2021):

Hello @duyhungtnn, could you tell me what value you are passing as the Concurrency (the ccu variable)?

@duyhungtnn commented on GitHub (Aug 20, 2021):

Hi @crossworth, the Concurrency value is 6.

I have two asynq servers (the Concurrency value is 6 per server).

@duyhungtnn commented on GitHub (Aug 20, 2021):

@crossworth commented on GitHub (Aug 20, 2021):

Looks like you need more workers. There are 12 active tasks on the default queue; if they are long-running tasks and you have 12 workers in total, you are out of workers.

You could try using strict queues, but if the tasks are long-running I don't think it will make a difference. What you need is some kind of queue reservation: maybe start a server with only the default queue and 2 or 4 workers, and use the rest for the fast-running tasks.

@duyhungtnn commented on GitHub (Aug 20, 2021):

Thank you for your suggestion. I tried it before and it works.

I just want to follow the documentation about weighted priority.

According to the documentation, when strict queues are not used and the default weighted priority applies, here is what I expected:

It doesn't happen. After 1 hour of waiting, no `critical` task had been processed. I chose to pause the `default` queue for a second, and everything came back to normal.

@crossworth commented on GitHub (Aug 20, 2021):

This occurs because you have long-running tasks and all the workers are blocked.

If each task took the same amount of time, the weighted priority would behave exactly as described. But when you introduce long-running tasks and don't have any free workers, you must wait for the running tasks to complete, and if they are long-running that can take a while.

When you paused the queue, you had enough workers to process the critical tasks.

You could solve this by limiting the number of long-running tasks, making them faster, or using multiple servers.
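To make the point about blocked workers concrete, here is a minimal sketch (not asynq code) of when a newly enqueued task can start. The helper name `earliestStart` and the minute-based timeline are assumptions for illustration; the figures (12 workers, tasks of roughly 10 minutes) are taken from this thread.

```go
package main

import "fmt"

// earliestStart returns the minute at which a task enqueued at enqueuedAt can
// begin, given the minute at which each worker finishes its current task.
// Hypothetical helper: it models only worker availability, because a task
// cannot start without a free worker, whatever the priority of its queue.
// It returns -1 when there are no workers at all.
func earliestStart(enqueuedAt int, busyUntil []int) int {
	start, found := -1, false
	for _, freeAt := range busyUntil {
		candidate := freeAt
		if candidate < enqueuedAt {
			candidate = enqueuedAt // worker is already idle when the task arrives
		}
		if !found || candidate < start {
			start, found = candidate, true
		}
	}
	return start
}

func main() {
	// 12 workers, all running default-queue tasks that finish at minute 10.
	allBusy := []int{10, 10, 10, 10, 10, 10, 10, 10, 10, 10, 10, 10}
	fmt.Println(earliestStart(1, allBusy)) // prints 10

	// Same pool, but one worker was kept free of long-running tasks.
	oneFree := []int{0, 10, 10, 10, 10, 10, 10, 10, 10, 10, 10, 10}
	fmt.Println(earliestStart(1, oneFree)) // prints 1
}
```

In the first case a critical task enqueued at minute 1 waits until minute 10 even though its queue has the highest weight; keeping a single worker away from long-running tasks removes the wait.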

Maybe we should implement more `QueueModes` like:

Where the `WeightedReserved` would reserve the workers for each queue without using them (on other queues) when idle. This would keep a lot of workers waiting most of the time, but it could be a good solution for your case and maybe others.

@duyhungtnn commented on GitHub (Aug 20, 2021):

@crossworth That is a good idea 👍

In my case, right after a long-running task finishes (it usually runs for 10 minutes), we actually have a free worker for a short time, and there are so many `critical` tasks out there. It just could not pick up any `critical` tasks but stuck with low-priority tasks.

@hibiken commented on GitHub (Aug 21, 2021):

@duyhungtnn thank you for raising this issue! It's something we should consider adding support for or at least update the documentation to make this clearer.

@crossworth's explanation is spot-on. Thank you for jumping in quickly to provide explanations!

For more context, the current implementation picks which queue to pull tasks from by calling this `queues()` function: github.com/hibiken/asynq@421dc584ff/processor.go (L323)

If the `StrictPriority` field is set, then it'll always query queues in descending priority order (i.e. higher-priority queue first). So in the scenario you described, if you set the `StrictPriority` field, all your workers should process tasks from the critical queue if there are any tasks in the queue.

If the `StrictPriority` field is not set, then the `queues` function will use queue priorities to generate a list of queues, with each queue name appearing as many times as its priority.

For example, with the below queue configuration:

it will generate a list

and it shuffles and dedups the list to get the list we use to query the queues.

So that's how you end up with the chance of 60%, 30%, 10% described in the godoc.
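The steps described above can be sketched in plain Go. This is an illustration of the described behaviour, not the library's actual code: the function name `queueOrder`, its `shuffle` parameter, and the priorities 6, 3, 1 (chosen to match the 60%, 30%, 10% figures) are assumptions.

```go
package main

import (
	"fmt"
	"math/rand"
	"sort"
)

// queueOrder returns the order in which queues would be polled for a task.
// Hypothetical sketch of the behaviour described in this thread; shuffle is
// injected so the randomness can be replaced (for example in tests).
func queueOrder(priorities map[string]int, strict bool, shuffle func(n int, swap func(i, j int))) []string {
	names := make([]string, 0, len(priorities))
	for name := range priorities {
		names = append(names, name)
	}
	// Sort by descending priority (name as tie-breaker) so the starting
	// point does not depend on map iteration order.
	sort.Slice(names, func(i, j int) bool {
		pi, pj := priorities[names[i]], priorities[names[j]]
		if pi != pj {
			return pi > pj
		}
		return names[i] < names[j]
	})
	if strict {
		return names // strict mode: always highest priority first
	}
	// Weighted mode: repeat each queue name as many times as its priority.
	var weighted []string
	for _, name := range names {
		for i := 0; i < priorities[name]; i++ {
			weighted = append(weighted, name)
		}
	}
	shuffle(len(weighted), func(i, j int) {
		weighted[i], weighted[j] = weighted[j], weighted[i]
	})
	// Dedup, keeping the first occurrence of each queue name.
	seen := make(map[string]bool, len(names))
	order := make([]string, 0, len(names))
	for _, name := range weighted {
		if !seen[name] {
			seen[name] = true
			order = append(order, name)
		}
	}
	return order
}

func main() {
	priorities := map[string]int{"critical": 6, "default": 3, "low": 1}
	fmt.Println(queueOrder(priorities, true, rand.Shuffle)) // prints [critical default low]
	fmt.Println(queueOrder(priorities, false, rand.Shuffle)) // a random order of the three queues
}
```

Note that the order only decides which queue is asked first when a worker becomes free. It has no effect while every worker is occupied by a long-running task.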

Until we implement something to reserve workers for each queue, potential solutions are to

- `StrictPriority` OR

Let me know if you have any questions! We'll consider adding support for reserving workers for each queue.
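As an illustration of what reserving workers per queue could look like, here is a minimal sketch. It is purely hypothetical: the thread does not show such a feature in asynq, and the function name `reserveWorkers` and the allocation rule (one worker per queue, the remainder split in proportion to priority using largest remainders) are assumptions made for this example.

```go
package main

import (
	"fmt"
	"sort"
)

// reserveWorkers splits concurrency workers across queues in proportion to
// their priorities, giving every queue at least one worker.
// Hypothetical helper, not part of asynq. It returns nil if there are fewer
// workers than queues or if any priority is not positive.
func reserveWorkers(concurrency int, priorities map[string]int) map[string]int {
	n := len(priorities)
	if n == 0 || concurrency < n {
		return nil
	}
	total := 0
	names := make([]string, 0, n)
	for name, p := range priorities {
		if p <= 0 {
			return nil
		}
		total += p
		names = append(names, name)
	}
	rest := concurrency - n // workers left after one per queue
	reserved := make(map[string]int, n)
	remainder := make(map[string]int, n)
	assigned := 0
	for _, name := range names {
		share := rest * priorities[name]
		reserved[name] = 1 + share/total
		remainder[name] = share % total
		assigned += share / total
	}
	// Hand out the leftover workers: largest remainder first, name as tie-breaker.
	sort.Slice(names, func(i, j int) bool {
		ri, rj := remainder[names[i]], remainder[names[j]]
		if ri != rj {
			return ri > rj
		}
		return names[i] < names[j]
	})
	for i := 0; i < rest-assigned; i++ {
		reserved[names[i]]++
	}
	return reserved
}

func main() {
	priorities := map[string]int{"critical": 6, "default": 3, "low": 1}
	fmt.Println(reserveWorkers(6, priorities)) // prints map[critical:3 default:2 low:1]
}
```

With a Concurrency of 6, as used in this thread, long-running `default` tasks could then occupy at most 2 workers, leaving 3 for `critical` tasks. The trade-off mentioned earlier still applies: reserved workers sit idle when their queue is empty.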

@duyhungtnn commented on GitHub (Aug 22, 2021):

@hibiken I think I slightly misunderstood the documentation.

After a day of running, the chances for each queue match what is shown in the docs. I think that is good.

I fixed this problem by setting up more servers, making sure the `critical` task is always handled first. `StrictPriority` ON.

So I think `reserving workers` could be an ideal feature.

@hibiken commented on GitHub (Aug 23, 2021):

@duyhungtnn Glad it worked out. These are great bug-reports/feature-requests. Thank you for reporting & keep 'em coming!