mirror of

https://github.com/007revad/Synology_HDD_db.git

synced 2026-04-25 13:45:59 +03:00

[GH-ISSUE #11] Not working for rs3621xs+ on DSM 6.2.4-25556 #12


Originally created by @nightmadeoflight on GitHub (Mar 17, 2023).

Original GitHub issue: https://github.com/007revad/Synology_HDD_db/issues/11

Drives added (and now showing as "already existing"):

WD4002FFWX-68TZ4N0 already exists in rs3621xs+_host.db

WD4002FFWX-68TZ4N0 already exists in rs3621xs+_host.db.new

WD40EFRX-68WT0N0 already exists in rs3621xs+_host.db

WD40EFRX-68WT0N0 already exists in rs3621xs+_host.db.new

Before the reboot, I get notifications on the Synology web interface saying they are unverified (each time I run the script).

After a reboot, they are still showing unverified.

@007revad commented on GitHub (Mar 18, 2023):

Can you please get a copy of your rs3621xs+_host.db file:

sudo cp /var.defaults/lib/disk-compatibility/rs3621xs+_host.db $HOME/rs3621xs+_host.db.txt

Then reply here and attach the rs3621xs+_host.db.txt file.

If you don't have the home folder service enabled change $HOME in the command above to a path you have access to.

@007revad commented on GitHub (Mar 22, 2023):

OP not responding

@nightmadeoflight commented on GitHub (Mar 23, 2023):

I upgraded DSM to the latest version 7 (from 6), ran the script and it worked. :)


@Greg-ATG commented on GitHub (Apr 13, 2023):

The issue here may be that some NAS models running DSM6 still reference the "X_v7.db" files. Actually, now that I think about it, our NAS (RS2421RP+) shipped with DSM7, but we completed a downgrade procedure to DSM6. Perhaps this is why it's referencing the v7 databases.

This would explain why @nightmadeoflight upgrading their NAS to DSM7 fixed the issue, since then this script would have started fixing the v7 database files.

Perhaps it's safest to modify the v7 database files (if present) regardless of the DSM version?

@007revad commented on GitHub (Apr 17, 2023):

@Greg-ATG

I've only seen X.db files in DSM 6 and X_v7.db files in DSM 7... but there could be situations where someone's Synology has both. I know when you upgrade from DSM 6 to DSM 7 it appends _v7 to all the .db file names.

Since you have downgraded from DSM 7 to DSM 6 I'm curious if your RS2421RP+ now has both X.db and X_v7.db files? Can you run the following command to see what it returns:

ls /var/lib/disk-compatibility/*.db

@Greg-ATG commented on GitHub (Apr 17, 2023):

Yes, the disk-compatibility directory contains both X.db and X_v7.db files for us. This must be related to the DSM6 downgrade.

@Greg-ATG commented on GitHub (Apr 19, 2023):

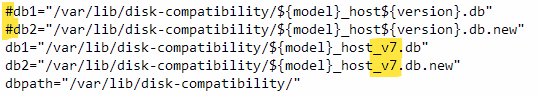

With the "removable disk" issue fixed in the latest version (2.1.38), I've confirmed that running the script on our RS2421RP+ w/ DSM6 does NOT fix the "unverified drives" warning in DSM. However, by hardcoding "_v7" into the script as shown below, running it DOES fix the warnings.

Would it be harmful to have the script look for ANY "${model}_host.db(.new)" OR "${model}_host_v7.db(.new)" files and patch all of them, regardless of DSM version installed?

@007revad commented on GitHub (Apr 21, 2023):

@Greg-ATG

I'm working on changing the script to ignore the DSM version and update all the host db files it finds. This will avoid the issue you had, and cater for any changes by new DSM versions in future. As it requires quite a few changes it'll take a couple of days to finish and test.

I'd like to get you to test it when I'm finished.
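A minimal sketch of the "update all the host db files it finds" idea, assuming the file names follow the `${model}_host.db(.new)` and `${model}_host_v7.db(.new)` patterns mentioned above. The helper name `list_host_dbs` and its arguments are hypothetical and are not taken from the script itself:

```shell
#!/usr/bin/env bash
# Sketch only: list_host_dbs is a hypothetical helper, not part of syno_hdd_db.

# Print every host db file for a model, with or without the _v7 suffix,
# including the .db.new copies. Arguments: db directory, lowercase model name.
list_host_dbs() {
    local dbdir="$1" model="$2" f
    for f in "$dbdir/${model}_host"*.db "$dbdir/${model}_host"*.db.new; do
        # An unmatched glob is left as literal text, so keep real files only.
        if [ -f "$f" ]; then
            printf '%s\n' "$f"
        fi
    done
}

# Example (commented out so the sketch has no side effects when sourced):
# list_host_dbs /var/lib/disk-compatibility "rs2421rp+"
```

Because the patterns end in `.db` and `.db.new`, stray copies such as `.dbr` files are not picked up, and nothing depends on which DSM version is installed.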

@Greg-ATG commented on GitHub (Apr 21, 2023):

Sounds good, no rush. I'll help out however I can.

@007revad commented on GitHub (Apr 23, 2023):

@Greg-ATG

I've got a new pre-release version that should solve the issue of DSM 6 using X_v7.db files after downgrading from DSM 7 to DSM 6. https://github.com/007revad/Synology_HDD_db/releases/tag/v2.2.40

It also has a new --restore option to undo all changes previously made by the script so you can test v2.2.40 with clean db files and synoinfo.conf file.

Can you please:

sudo syno_hdd_db --restore

It works perfectly in my test environment where I simulate having two expansion units and an M.2 card. But as I don't actually have an expansion unit or M.2 card I wouldn't be surprised if there's a bug that I didn't find.

@007revad commented on GitHub (Apr 23, 2023):

@Greg-ATG

Sorry, that pre-release version was mistakenly the same v2.1.38 !

Try the new https://github.com/007revad/Synology_HDD_db/releases/tag/v2.2.42

@Greg-ATG commented on GitHub (Apr 24, 2023):

I ran the script with restore mode (nice feature!) with the following output:

However, the NAS reported NO unverified drives after a reboot. Upon closer inspection, I found the following:

I'm not sure if these inconsistencies are bugs with the restore mode or (more likely) a result of the tinkering I had previously done with these files.

I manually cleaned up all of these files and rebooted the NAS, which resulted in the expected unverified disks warning. I then ran the script with my preferred parameters (-showedits -noupdate -m2) and I'm happy to report that there was no unverified disk warning after rebooting and all the DB files look correct (it was at this point that I realized that this script adds the modifications to v7 files at the end, hence my note up above about v7.db.new, and I manually cleaned up the v7.db.new.bak file).

In the interest of science, I ran the script in restore mode again and can confirm that everything was restored correctly. I then ran the script and rebooted twice using my preferred parameters. After the first execution, everything looked good, but after the second one, it appears duplicate entries were added to the regular (v6) DB files:

This is confirmed by the script log (for the second execution), which shows that the v6 files were "re-patched" while the v7 files were correctly detected as "already exists":

FYI, the v7 DB files look fine after the second execution. Also, we don't have any expansion units or M.2 cards in our system.

@007revad commented on GitHub (Apr 24, 2023):

Thank you for all your testing.

I chose the easy way to restore the files by restoring them from their backups. I did realise that there was a risk of restoring an edited file if the .db file was edited before it was backed up. I'll work out how to make DSM download clean db files.

For the duplicating entries in the v6 db files I've noticed the script is also creating an extra file named rs2421rp+_host.dbr with an "r" on the end. I haven't figured out what is causing this yet.

I've found the cause of the duplicated entries in the v6 db files which has existed since v1.3.32 but nobody (including me) noticed until now. It will be an easy fix.
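The thread does not say what the actual cause was, so the following is only a sketch of the general guard that keeps repeated runs from adding the same drive twice: check for the drive's key before editing. The function names are hypothetical, and the check assumes each drive model appears in the db file as a quoted JSON key followed by a colon:

```shell
#!/usr/bin/env bash
# Sketch only: both functions are hypothetical, not taken from syno_hdd_db.

# Succeed if the drive model is already present as a JSON key in the db file.
drive_in_db() {
    local model="$1" dbfile="$2"
    # -F matches a fixed string, so characters such as "+" or "." in a
    # model name are never treated as regex syntax.
    grep -qF "\"${model}\":" "$dbfile"
}

# Report what a run would do for one drive; the real edit is left out.
add_drive_once() {
    local model="$1" dbfile="$2"
    if drive_in_db "$model" "$dbfile"; then
        echo "$model already exists in $(basename "$dbfile")"
    else
        echo "$model would be added to $(basename "$dbfile")"
    fi
}
```

Running the same check against every db file before each edit is what makes a second execution a no-op.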

@007revad commented on GitHub (Apr 24, 2023):

@Greg-ATG

I've fixed the multiplying entries in the v6 db files issue.

I've also fixed the v6 .db files being copied with a .dbr extension issue (which has existed since v1.1.10).

https://github.com/007revad/Synology_HDD_db/releases/tag/v2.2.43

@Greg-ATG commented on GitHub (Apr 25, 2023):

Everything seems to work great! No redundant entries and the .dbr/.newr files don't reappear anymore.

It would be pretty cool if you can pull clean DB copies from Synology when using "--restore"! If that doesn't work out, maybe it makes sense to create two types of backups:

Where the "origbak" is created the first time the script runs (i.e. if "origbak" doesn't already exist) whereas any subsequent times the script runs and needs to make a change, it creates/overwrites "lastbak" so as not to overwrite the original backup. I suppose this would require two different restore modes (restore vs restorelast).

Anyway, I appreciate all your work in getting these issues resolved!
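The two-backup idea can be sketched as follows. The names "origbak" and "lastbak" come from the suggestion above, but using them as file suffixes, and the function names `backup_db` and `restore_db`, are assumptions made for illustration only:

```shell
#!/usr/bin/env bash
# Sketch only: hypothetical helpers illustrating the origbak/lastbak idea.

# Keep the very first backup forever; later runs only refresh lastbak.
backup_db() {
    local dbfile="$1"
    if [ ! -f "${dbfile}.origbak" ]; then
        cp -p "$dbfile" "${dbfile}.origbak"
    else
        cp -p "$dbfile" "${dbfile}.lastbak"
    fi
}

# Restore from the chosen backup: "origbak" (default) or "lastbak".
restore_db() {
    local dbfile="$1" which="${2:-origbak}"
    if [ ! -f "${dbfile}.${which}" ]; then
        echo "No ${which} backup for $dbfile" >&2
        return 1
    fi
    cp -p "${dbfile}.${which}" "$dbfile"
}
```

With this layout a plain restore returns to the state before the first run, while `restore_db "$dbfile" lastbak` only undoes the most recent run.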

@007revad commented on GitHub (Apr 26, 2023):

Funny you should mention that. I added that to the develop branch, 3 hours before you commented :)

I just need to change one of the images in the readme to show the --restore option then I'll push it to the main branch and create a new release. Though I should probably edit the script to also delete any .dbr and .newr files.

@007revad commented on GitHub (Apr 26, 2023):

I forgot to mention that v2.2.44 is now available. https://github.com/007revad/Synology_HDD_db/releases/tag/v2.2.44

@tmnext commented on GitHub (Apr 27, 2023):

I am having the same problem on DS1823xs+ on DSM7.2 RC1; HDDs work, but firmware warning. The --restore in v2.44 leads to:

rm: cannot remove '/var/lib/disk-compatibility/*dbr': No such file or directory

rm: cannot remove '/var/lib/disk-compatibility/*db.newr': No such file or directory

@007revad commented on GitHub (Apr 27, 2023):

Those messages are harmless, but I have now prevented them from appearing in v2.2.45

Is this the first time you've used the script?

Does it find your drives?

Can you run the script with -s or --show then copy and paste the output from the script here, or paste a screenshot.
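On the harmless `rm` messages: they appear because a glob that matches nothing is handed to `rm` as literal text. How v2.2.45 suppresses them is not stated here; one common approach, sketched with a hypothetical helper name, is to delete only paths that really exist:

```shell
#!/usr/bin/env bash
# Sketch only: remove_stray_copies is a hypothetical helper.

# Delete leftover *dbr and *db.newr files without printing an error
# when none exist. Argument: the db directory.
remove_stray_copies() {
    local dbdir="$1" f
    for f in "$dbdir"/*dbr "$dbdir"/*db.newr; do
        # An unmatched pattern stays literal and fails the -f test,
        # so rm is never called on a non-existent path.
        if [ -f "$f" ]; then
            rm -f -- "$f"
        fi
    done
}

# Example (commented out so the sketch has no side effects when sourced):
# remove_stray_copies /var/lib/disk-compatibility
```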

@tmnext commented on GitHub (Apr 27, 2023):

Yes first time, the system is new. The 2.45 does run successfully, here is the output from one of the drives:

"WD221KFGX-68B9KN0": {

"0A83": {

"compatibility_interval": [

{

"compatibility": "support",

"not_yet_rolling_status": "support",

"fw_dsm_update_status_notify": false,

"barebone_installable": true

}

All good, with the exception that the firmware warning does not go away (interestingly, it does not appear for the Samsung 970 M.2 drive, which runs with your enable_m2 script).

@tmnext commented on GitHub (Apr 27, 2023):

More output:

Synology_HDD_db v2.2.45

DS1823xs+ DSM 7.2-64551 RC

Using options: -n

HDD/SSD models found: 3

SSD 860 EVO 4TB,2B6Q

WD221KFGX-68B9KN0,0A83

WUH721414ALE6L4,W240

M.2 drive models found: 2

Samsung SSD 960 PRO 2TB,ccc

Samsung SSD 970 EVO Plus 2TB,ccc

No M.2 cards found

No Expansion Units found

SSD 860 EVO 4TB already exists in ds1823xs+_host_v7.db

SSD 860 EVO 4TB already exists in ds1823xs+_host_v7.db.new

WD221KFGX-68B9KN0 already exists in ds1823xs+_host_v7.db

WD221KFGX-68B9KN0 already exists in ds1823xs+_host_v7.db.new

WUH721414ALE6L4 already exists in ds1823xs+_host_v7.db

WUH721414ALE6L4 already exists in ds1823xs+_host_v7.db.new

Samsung SSD 960 PRO 2TB already exists in ds1823xs+_host_v7.db

Samsung SSD 960 PRO 2TB already exists in ds1823xs+_host_v7.db.new

Samsung SSD 970 EVO Plus 2TB already exists in ds1823xs+_host_v7.db

Samsung SSD 970 EVO Plus 2TB already exists in ds1823xs+_host_v7.db.new

Support disk compatibility already enabled.

Support memory compatibility already enabled.

M.2 volume support already enabled.

Disabled drive db auto updates.

DSM successfully checked disk compatibility.

@007revad commented on GitHub (Apr 27, 2023):

If you've upgraded the memory with non-Synology memory you should include the -r option when running the script, to prevent these annoying notifications.

Try the -f option. So you'd want to use -nfr

The -f option should get rid of the firmware warnings until I can work out what 7.2 RC has changed since 7.2 beta.

@tmnext commented on GitHub (Apr 27, 2023):

Thanks for your prompt reply and the awesome work here :) I just tried -nfr with v2.2.45, but there is no change in output and warnings are still the same. No rush, I'll just wait till you get around to it. Thanks again.

@tmnext commented on GitHub (Apr 27, 2023):

After restart (DSM7.2 RC1), the storage manager is not visible anymore. Not sure if that's related to 7.2 RC1 or the script :)

@007revad commented on GitHub (Apr 27, 2023):

Had you run my Synology_enable_M2_volume or Synology_enable_Deduplication scripts? They caused a couple of minor issues in 7.2-64213 Beta - which Synology fixed in 7.2-64216 Beta.

Otherwise it sounds like a 7.2 RC1 bug.

You can run

syno_hdd_db.sh --restore

to undo the changes made by syno_hdd_db then check if storage manager is back.

@tmnext commented on GitHub (Apr 28, 2023):

I repaired it by taking out all disks, adding a fresh one in empty slot one and installing DSM7.2RC1, re-inserting the old disks same slots while the system is running, and they popped up as "online assembly". All good - procedure might be useful for others.

This is now slightly off-topic, but I am running your hdd_db and enable_m2 scripts. Why do I need the enable_dedupe as well?

@007revad commented on GitHub (Apr 28, 2023):

You don't need to run enable_dedupe as well. I was just wondering if you had used either enable_m2_volume OR enable_dedupe before storage manager went missing.

Are you still getting the firmware warning? And have you rebooted yet to see if the problem returns?

@tmnext commented on GitHub (Apr 28, 2023):

<Are you still getting the firmware warning? And have you rebooted yet to see if the problem returns?>

After restart, system normal, but still showing firmware warnings for all non-M2 drives.

@007revad commented on GitHub (Apr 28, 2023):

Someone with a DS918+ and 7.2 RC has also reported that they lost access to Storage Manager, the Snapshot Replication app, and most of the Hardware tab in Control Panel (fan speed, beep setting, etc). Also in Control Panel/Info Center "Model name" is listed as "Unknown Model" (instead of DS918+) and "Fan Speed Mode" is blank. It sounds like it may be because DSM doesn't know what the model is any more.

This tells me that the issue affects not just business models or recent models but probably all models.

I'm going to check all the Synology forums to see if it is a known RC bug. If it's not then I'll switch off my DS1821+, pull my HDDs, install a spare drive then run my scripts, one at a time, to see if I can reproduce the issue.

@007revad commented on GitHub (Apr 29, 2023):

When you wrote "and installing DSM 7.2 RC1" did you mean you created a storage pool on that drive so it had a clean copy of DSM 7.2 RC1 on its system partition, or that you were able to install the DSM 7.2 RC1 .pat file again?

@tmnext commented on GitHub (Apr 29, 2023):

To clarify: This was exactly what had happened to mine (I didn't want to overload GitHub here with off-topic things): model unknown, storage manager gone, half the tabs gone. The system still felt snappy, all data intact, almost as if there was a "rescue" mini-DSM underneath.

As for your question: I pulled out all drives with the system off, added a fresh one (never used before), and let web-assistant install DSM by uploading the DSM7.2RC1 pat file, but did NOT create a new pool or volume when the system was up. I gave it the same name and same account name. Then, while the system was up, I inserted all my disks in the same slots (that only worked because I hadn't populated all slots). At that point the system offered all three of my old pools back as "online assembly possible". (I also tested turning the system off before inserting the old drives, but it seemed the system would then randomly choose a DSM partition from an old drive and the same problem occurred, so that did not work; only inserting the old drives while the system was running from the fresh drive worked.)

To top it off, I also used "full volume encryption" when I created the volumes the first time around, and during online assembly I was nicely asked to re-create the key vault with my old password, and the system offered to upload the keys for each volume via my laptop, all that without having a working volume yet. Only then did I re-run your hdd_db script 2.45 and the enable m2_volume script; I restarted several times to make sure and it seems to work fine since then. Maybe it had to do with me coming from beta to RC1 the first time around (not the beta 64123, one after that, I think it ended in 6), or that I used the -f option, which I didn't the second time. Since the system is new, I'm pretty sure there isn't anything else I did with the system, and no other scripts are being used. I also used the automatic update function of hdd_db; the original script was a few days older.

@007revad commented on GitHub (Apr 30, 2023):

I've noticed that the .db files in 7.2 RC include "smart_test_ignore" and "smart_attr_ignore" for all drives. In 7.2 beta and 7.1 it was only Synology drives that had those.

Synology drives have:

"smart_test_ignore": true,

"smart_attr_ignore": true

And in 7.2 RC 3rd party drives now have:

"smart_test_ignore": false,

"smart_attr_ignore": false

While manually adding your drives to a clean DS1823xs+ DSM 7.2 RC host db file (for you to test) I noticed that the firmware versions don't look correct.

Can you run the following commands and let me know what they return:

for d in /sys/block/sata*; do echo $(cat "$d/device/rev"); done

cat /sys/block/nvme0n1/device/firmware_rev

cat /sys/block/nvme1n1/device/firmware_rev

If the last 2 commands return "No such file or directory" then run these commands:

cat /sys/block/nvme0n1/device/rev

cat /sys/block/nvme1n1/device/rev

@007revad commented on GitHub (May 5, 2023):

@70m7E not responding