Mirror of https://github.com/ArchiveBox/ArchiveBox.git (synced 2026-04-26 01:26:00 +03:00)

[GH-ISSUE #642] Bug: Imports from older archives lead to ArchiveResult(output=None, status='skipped') entries polluting latest_outputs and showing 0 files in the UI #397


Originally created by @drpfenderson on GitHub (Feb 1, 2021).

Original GitHub issue: https://github.com/ArchiveBox/ArchiveBox/issues/642

Describe the bug

When viewing the webserver index of my archive, using docker-compose up -d, certain entries show that they have 0 sources available for viewing. However, clicking on the entries shows that there are multiple, sometimes all, sources.

Steps to reproduce

0aea5ed3e8 using docker-compose run archivebox init.
docker-compose up -d.

Screenshots or log output

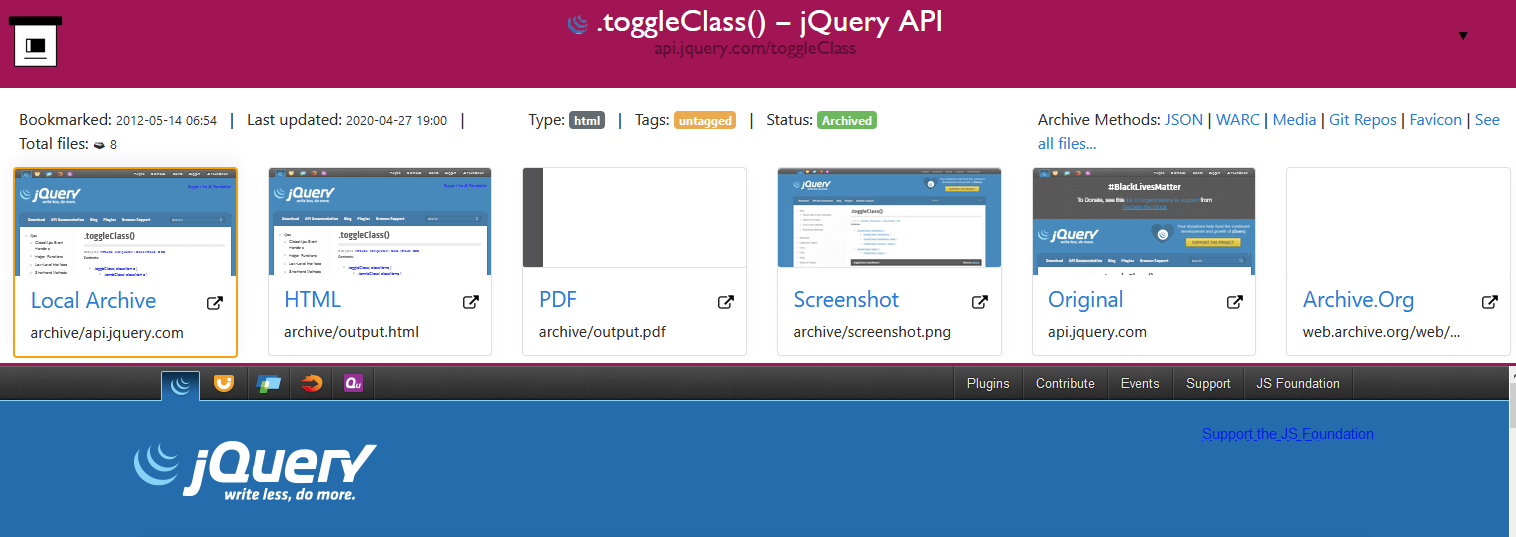

Main index:

Single-item:

ArchiveBox version

@pirate commented on GitHub (Feb 2, 2021):

So in v0.5.3/v0.5.4 we switched from using the filesystem as the single source of truth on archive outputs to using the sqlite3 database.

This means that when you imported your v0.4.2 archive, it read link:history entries from the index.json into ArchiveResult DB rows to move the state from the filesystem into the DB.

Unfortunately we didn't backtest old versions enough when we released v0.5.3, and we forgot that older archives (< 0.4.20ish) store every skipped attempt as ArchiveResult(output=None, status=skipped) entries (we stopped doing that in later versions). This means that many links imported from older archives may show up with, for example, their latest_outputs[*]=null.

It's a harmless bug from a data-safety perspective, but it has the annoying result of showing 0 files in the UI because it thinks all the outputs are null. I think you can fix it by running archivebox update --extract=headers 'https://example.com/url/to/update.jpg' on each URL that's broken to re-parse all the outputs and refresh latest_outputs, though I'll have to take a closer look.

@pirate commented on GitHub (Apr 10, 2021):

OK, so the final solution I recommend is to trigger a re-archive on all the URLs that are showing up as skipped. You don't have to re-archive them from scratch: just pull the favicon, title, or headers (something innocuous like that) to get it to re-save the ArchiveResults to the DB, and you should be good to go.
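To illustrate why the skipped entries from older archives make a snapshot look empty, here is a toy model. It is a hypothetical sketch, not ArchiveBox's real ArchiveResult model or its latest_outputs code: it assumes that the most recent attempt per extractor wins regardless of status, so a later skipped attempt with output=None shadows an earlier successful one. The Result class, latest_outputs helper, and ignore_skipped flag are all made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Result:
    """Hypothetical stand-in for an ArchiveResult row (not ArchiveBox's real model)."""
    extractor: str          # e.g. "wget", "title"
    status: str             # "succeeded", "failed", or "skipped"
    output: Optional[str]   # saved output, or None for skipped attempts
    ts: int                 # stand-in for the attempt timestamp


def latest_outputs(results: List[Result], ignore_skipped: bool = False) -> Dict[str, Optional[str]]:
    """Return the output of the most recent attempt per extractor.

    With ignore_skipped=True, skipped attempts and None outputs are dropped
    first, so they cannot shadow an earlier successful output.
    """
    latest: Dict[str, Result] = {}
    for r in results:
        if ignore_skipped and (r.status == "skipped" or r.output is None):
            continue
        if r.extractor not in latest or r.ts > latest[r.extractor].ts:
            latest[r.extractor] = r
    return {name: r.output for name, r in latest.items()}


def file_count(outputs: Dict[str, Optional[str]]) -> int:
    """Count extractors with a non-null output, like a "files" column would."""
    return sum(1 for out in outputs.values() if out is not None)


# Old-style history: each extractor succeeded once, then later re-runs were
# recorded as skipped entries with output=None.
history = [
    Result("wget", "succeeded", "example.com/index.html", 1),
    Result("title", "succeeded", "Example Domain", 1),
    Result("wget", "skipped", None, 2),
    Result("title", "skipped", None, 2),
]

print(file_count(latest_outputs(history)))                       # 0
print(file_count(latest_outputs(history, ignore_skipped=True)))  # 2
```

In this toy model the real outputs are still there; they are just hidden behind the newer null entries, which matches the "harmless from a data-safety perspective" description above.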

The easiest way is to select them all in the UI and hit "pull title".
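If you'd rather script it than click through the UI, the earlier suggestion of running archivebox update --extract=headers on each broken URL can be batched. This is a minimal sketch, assuming you already have a list of the affected URLs and, by default, the docker-compose setup from the report (the same docker-compose run archivebox prefix used for init). The helper names and the dry_run switch are hypothetical. The command-line flag is taken from the comment above, and by default the script only prints the commands.

```python
import shlex
import subprocess
from typing import Iterable, List


def build_update_commands(urls: Iterable[str], extractor: str = "headers",
                          use_docker_compose: bool = True) -> List[List[str]]:
    """Build one `archivebox update --extract=<extractor> <url>` command per URL."""
    prefix = ["docker-compose", "run", "archivebox"] if use_docker_compose else ["archivebox"]
    return [prefix + ["update", f"--extract={extractor}", url] for url in urls]


def run_updates(urls: Iterable[str], extractor: str = "headers",
                use_docker_compose: bool = True, dry_run: bool = True) -> None:
    """Print (dry_run=True) or execute each update command in turn."""
    for cmd in build_update_commands(urls, extractor, use_docker_compose):
        if dry_run:
            print(shlex.join(cmd))  # shlex.join needs Python 3.8+
        else:
            subprocess.run(cmd, check=True)  # stop at the first failing command


# Dry run: prints
# docker-compose run archivebox update --extract=headers https://example.com/url/to/update.jpg
run_updates(["https://example.com/url/to/update.jpg"])
```

Running one command per URL mirrors the per-URL suggestion above; pass extractor="title" to match the "pull title" route instead.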

Give that a go on v0.6 and let me know here if you're still struggling with missing files in the UI and I'll reopen the ticket.